Caching Data

👤 This documentation is intended for Site Administrators and reviews different caching strategies that are available within the Cache and Warehouse Infrastructure options. For SQL Users looking to query the Cache, refer to the documentation listed here.

Table of Contents:

<UL>

<LI><a href="#WhatIsCache">What is the Sisense Cache?</a></LI>

<LI><a href="#CacheSummary">Summary of Caching Strategies</a></LI>

<LI><a href="#CacheStratDetails">Caching Strategies - Detailed Descriptions</a></LI>

<UL>

<LI><a href="#FullUpdates">Full Updates</a></LI>

<LI><a href="#IncrementalUpdates">Incremental Updates</a></LI>

<LI><a href="#BoundedUpdates">Bounded Updates</a></LI>

<LI><a href="#ModifiedUpdates">Modified Updates</a></LI>

</UL>

<LI><a href="#SuportedTableChars">Suggested Caching Strategies by Table Characteristics</a></LI> <LI><a href="#ImportCols">Import Columns</a></LI>

<UL>

<LI><a href="#CommonImportPitfalls">Common Import Column Pitfalls</a></LI>

</UL>

<LI><a href="#CacheTable">Caching a Table</a></LI>

<UL>

<LI><a href="#FullOpts">Full Update Options</a></LI>

<LI><a href="#IncOpts">Incremental Update Options</a></LI>

<LI><a href="#BoundedOpts">Bounded Options</a></LI>

</UL>

<LI><a href="#ReloadRemovePause">Reloading, Removing or Pausing a Table in the Cache</a></LI>

<LI><a href="#CacheStatus">Caching Status</a></LI>

</UL>

<HR>

<a name="WhatIsCache"></a>

What is the Sisense Cache?

The Sisense Cache is part of the Data Engine and is an add-on feature. Customers with the Data Engine add-on have the option of caching their data to either the Redshift Cache or the Snowflake Cache. This infrastructure is ideal for processing intensive queries and allows users to create Materialized Views for even more significant performance gains.

When Sisense for Cloud Data Teams caches a table, all of the table's rows are copied into the cache. Four different cache population strategies are used to keep the cache's version of a database table in sync with it's database counterpart. Many tables use multiple caching strategies in parallel on different schedules to maximize freshness and minimize differences.

Additionally, the power of the Sisense Cache can be utilized through Cross-Database Joins. For more information on Cross-Database joins, please refer to the documentation here.

Note: By default, column values are truncated to 1024 characters. This can be increased to 30000 characters on a column-by-column basis. To increase the truncation limit for a specific column, please reach out to the Sisense support team at [email protected] and specify the relevant database, schema, table, and column names.

<a href="#top">Back to top</a>

<a name="CacheSummary"></a>

Summary of Caching Strategies

Further details on each caching strategy can be found below.

<div><table><thead><tr><th style="width:200px;text-align:center"> </th><th style="width:200px;text-align:center">Full Updates</th><th style="width:200px;text-align:center">Incremental Updates</th><th style="width:300px;text-align:center">Bounded Updates</th><th style="width:300px;text-align:center">Modified Only</th></tr></thead><tbody><tr><td>Description</td><td align="center">Full table reload</td><td align="center">Load only newly added rows</td><td align="center">Load rows within a designated timeframe</td><td align="center">Load new rows and update modified rows</td></tr><tr><td>Requires import column</td><td align="center">Only for non-Redshift tables</td><td align="center">✓</td><td align="center">✓</td><td align="center">Requires unique ID column</td></tr><tr><td>Supported import column/Unique ID datatypes</td><td align="center">timestamps and integers</td><td align="center">timestamps and integers</td><td align="center">timestamps</td><td align="center">UUIDs or integers</td></tr><tr><td>Import column/Unique ID must be unique</td><td align="center">✓</td><td align="center">✓</td><td align="center">✓</td><td align="center">✓</td></tr><tr><td>Import column/Unique ID must be non null</td><td align="center">✓</td><td align="center">✓</td><td align="center">✓</td><td align="center">✓</td></tr><tr><td>Import column must be monotonically increasing</td><td align="center"></td><td align="center">✓</td><td align="center">✓</td><td align="center"></td></tr><tr><td>Additional requirements</td><td align="center"></td><td align="center"></td><td align="center"></td><td align="center">Timestamp field for when the row was last updated, Does not support row deletions</td></tr></tbody></table></div>

<a href="#top">Back to top</a>

<a name="CacheStratDetails"></a>

Caching Strategies - Detailed Descriptions

<a name="FullUpdates"></a>

Full Updates

This is the most basic and heavyweight option. A complete copy of the table is downloaded on a schedule and replaces the version in the cache. When pulling from a Redshift database, all rows will be downloaded in a one-time pull. All other database types would require a valid import column as highlighted below. This is the only caching strategy that captures any deletions of existing rows.

Every table starts with this strategy because at first the cache is empty and this is the most efficient way of transferring all of the rows. Full updates have high latency, but are necessary for tables that change throughout or for warehouses that get completely rebuilt as part of nightly processes.

<a name="IncrementalUpdates"></a>

Incremental Updates

For tables that do not change historical records, incremental updates are the obvious choice. As long as the table has a column with a monotonically increasing value (e.g. a numeric id or created_at timestamp) it's easy to grab the newest rows every few minutes.

The Incremental Updates strategy is frequently paired with Full Updates for tables that are growing and changing at the same time. On a typical users table, this combination of caching strategies gets new users into the cache very quickly, and occasionally updates all of the historical users in case their rows changed.

We grab the newest rows by comparing the maximum value of the import column between the cached and origin tables:

-- max value in the cache is 123456 select * from users where id > 123456;

<a name="BoundedUpdates"></a>

Bounded Updates

The third strategy is for large tables that only update recent rows. This is especially useful when the table is too large to regularly do a Full Update, and is not truly append-only.

We see this most often with tables that hold objects soon to be changed by a background process, like email and transaction logs tables. These rows get marked as successful or failed a few minutes after they are created.

Note: Bounded Updates are only supported on tables with a timestamp import column and the bound must be in terms of the import column.

Every time it's scheduled, the Bounded Updates strategy replaces the most recent N days of rows. The Sisense Cache rows are replaced by rows in the customer database that have an import column value after the start of the bound. With the Bounded Update strategy, there is a short period of time where the rows have been removed from the Sisense Cache but have not been replaced with the updated rows.

For example, a configuration that fetches and replaces the last 3 days of rows every day using a created_at import column. This strategy will first remove all rows in the Sisense cache where created_at is in the last 3 days. It will then import rows from the customer database where created_at is greater than the largest value that exists on the cache.

<a name="ModifiedUpdates"></a>

Modified Updates

The Modified-Only strategy is the fastest and most efficient caching strategy. The cache will only pull in updated or newly added rows. In order to do so, Sisense requires two valid import columns: one unique import ID which cannot be a timestamp and one modified_at timestamp column. Sisense first compares the modified_at timestamp column to the last time of import. All rows that have a value in the modified_at column greater than the last import will then be evaluated for their unique import IDs.

In the example below, the last import is 2017-01-01 01:02:03.123

select [all columns] from table where modified_at > '2017-01-01 01:02:03.123' order by modified_at ASC

Note: The "modified_at" column should be indexed to improve the performance of selecting the changed raws.

If the ID currently exists in the cached copy of the table, Sisense will update all column values for that ID. However, if the ID does not already exist, Sisense will write in a new row. Only recently added or modified rows will be queried from the source and merged into the cache.

Note: The import column must be unique in order to ensure the cached copy of the table is not corrupted.

The Modified Update caching strategy does not support row deletions. If deletions need to be simulated, rows must employ a "soft delete." In a soft delete, a table contains a deleted_at field. When the row needs to be "deleted," a timestamp is added to the deleted_at field, in turn updating the updated_at field. Analyses are then filtered on the condition where deleted_at is null.

<a href="#top">Back to top</a>

<a name="SuportedTableChars"></a>

Suggested Caching Strategies by Table Characteristics

<div><table><thead><tr><th style="width:400px;text-align:center">Table Description</th><th style="width:200px;text-align:center">Full Updates</th><th style="width:200px;text-align:center">Incremental Updates</th><th style="width:200px;text-align:center">Full + Incremental Updates</th><th style="width:300px;text-align:center">Bounded Updates</th><th style="width:300px;text-align:center">Modified Only</th></tr></thead><tbody><tr><td>Existing rows remain unchanged (append-only tables)</td><td align="center"></td><td align="center" valign="middle">✓</td><td align="center"></td><td align="center"></td><td align="center"></td></tr><tr><td>Only recently added rows are changed</td><td align="center"></td><td align="center"></td><td align="center" valign="middle">✓</td><td align="center" valign="middle">✓</td><td align="center" valign="middle">✓</td></tr><tr><td>Historical records are changed and a modified_at timestamp field exists</td><td align="center"></td><td align="center"></td><td align="center"></td><td align="center"></td><td align="center" valign="middle">✓</td></tr><tr><td>Historical records are changed and no modified_at timestamp field exists</td><td align="center" valign="middle">✓</td><td align="center"></td><td align="center">✓</td><td align="center"></td><td align="center"></td></tr><tr><td>Existing rows are deleted from the table entirely</td><td align="center" valign="middle">✓</td><td align="center"></td><td align="center">✓</td><td align="center"></td><td align="center"></td></tr></tbody></table></div>

<a href="#top">Back to top</a>

<a name="ImportCols"></a>

Import Columns

Aside from the Modified Only caching strategy and Full Only Updates on Redshift tables, all caching strategies require an import column. This is because Sisense for Cloud Data Teams grabs data in batches of 100,000 rows and re-queries the table until all rows have been acquired. To do this, Sisense for Cloud Data Teams requires use an integer or timestamp import column that is unique, non-null, and monotonically increases in value.

Common columns that work well as import columns include id columns that are auto-incrementing with new rows, and created_at timestamps that are unique and indexed.

<a name="CommonImportPitfalls"></a>

Common Import Column Pitfalls

The Sisense Cache Full, Bounded, and Incremental population strategies rely on import columns to find new rows, and can miss rows if the expectations are not met.

New rows are expected to have larger values than old rows. Non-integer IDs and UUIDs usually do not meet this requirement. Thus, import columns must be:

- Monotonically Increasing

- Unique

- Non-Null

<a href="#top">Back to top</a>

<a name="CacheTable"></a>

Caching A Table

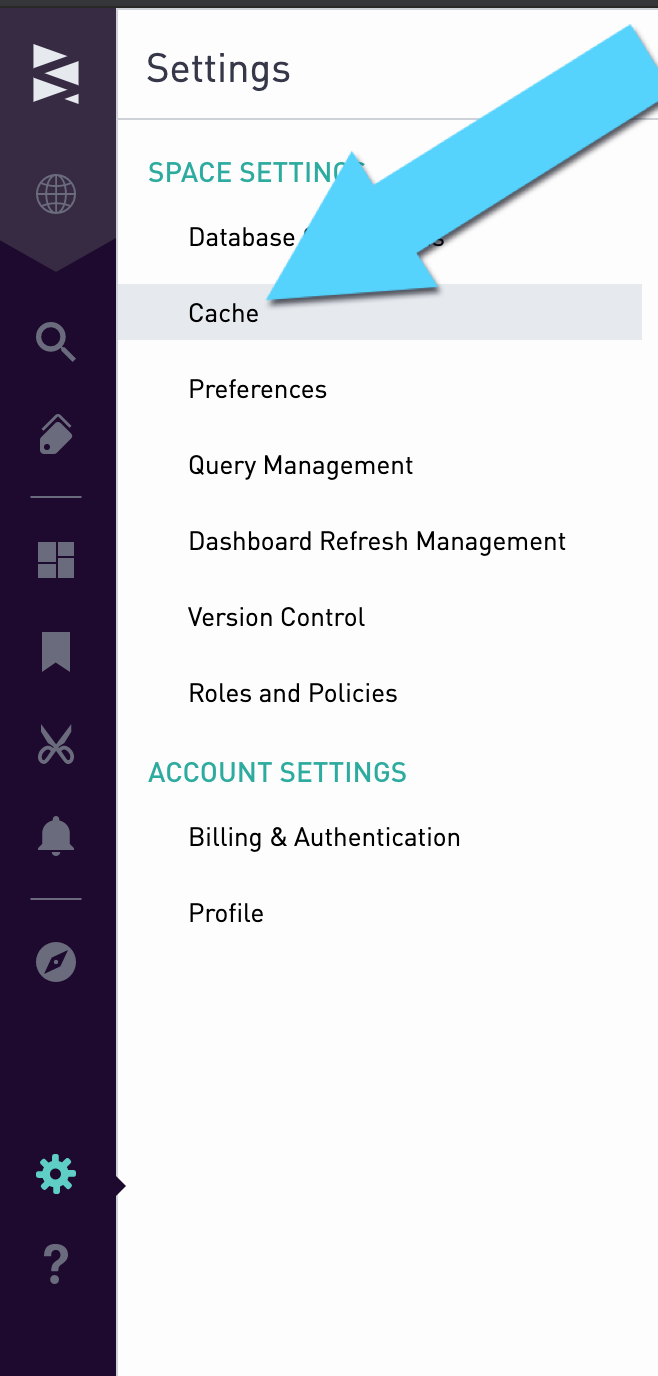

Admin users can add new tables to the Sisense cache, as well as edit current cache settings and remove tables from the cache. To start caching tables, navigate to the cache menu via the settings icon in the bottom left corner of the screen.

Open the caching menu for a table by clicking the row that corresponds to that table. Select the Caching Strategy from the dropdown that best fits the table as described in the strategies above.

After selecting a caching strategy, choose an import column from the dropdown. Click the question mark to find out more information on selecting the appropriate import column.

<a href="#top">Back to top</a>

<a name="#FullOpts"></a>

Full Update Options

The Full Update of the cache can be set to run at an interval.

Alternatively, the Full Update can be scheduled to run at specific time in UTC every day.

<a href="#top">Back to top</a>

<a name="IncOpts"></a>

Incremental Update Options

If an "Incremental" or "Full and Incremental" Caching Strategy has been selected, the Incremental Update interval can be set.

Once a table has been configured for the Cache, click "Save" to start the caching process.

<a href="#top">Back to top</a>

<a name="BoundedOpts"></a>

Bounded Update Options

If a "Bounded Updates" Caching Strategy is selected, both the update interval and update window will need to be selected. The interval is the frequency at which the updates will occur and the window is how far back from the current time the rows will be refreshed.

In the above screenshot, the annotations table has been set to perform bounded updates every 8 hours. When the bounded update runs, it will look back over the update window of 4 days from the current timestamp and reload that data into the cache.

NOTE: The cache sets type `numeric` (20,8)by default which gives you max length of 12 before decimal point. If `numeric` exceeds the max length, then harvest will fail. Please reference https://docs.aws.amazon.com/redshift/latest/dg/r_Numeric_types201.html

<a href="#top">Back to top</a>

<a name="ReloadRemovePause"></a>

Reloading, Removing, or Pausing a Table in the Cache

In the scenario that a full update of the table that is currently cached needs to be restarted, the "Reload" icon in the cache menu can be clicked.

If a table is no longer needed on the Cache, it can be removed using the "Uncache" button in the Cache menu.

To temporarily stop caching a table, the "Suspend Caching" can be turned on switch. This will stop any future caches of the table but keep the current cached version of the table stored. The cached version will remain available for querying.

<a href="#top">Back to top</a>

<a name="CacheStatus"></a>

Caching Status

If there is no info icon, it indicates a table is not yet cached and a caching strategy is not placed:

A grey dotted info icon indicates a table is in the queue to be cached or currently caching:

A green solid info icon indicates a table is cached successfully:

A grey dotted info icon with the status "Disabled" indicates a table is suspended caching on the first run:

A green solid info icon with the status "Disabled" indicates a table was cached successfully and is suspended caching:

A red solid info icon indicates a table that was previously cached failed to update its data during a subsequent refresh:

A red dotted info icon indicates a table failed to cache on the first run due to an error:

<a href="#top">Back to top</a>